So lately I’ve also deployed a trial version of Exchange 2016 in my lab.

This version of Exchange brings a lot of improvements over the last version I had installed, Exchange 2010. Of particular note is the removal of MAPI/CDO support. This is noteworthy because VMware Data Protection (VDP), and presumably other backup solutions, relied on MAPI for their Granular Level Recovery (GLR) functionality. As a result, VDP 6.1 doesn’t support restoring an individual mailbox from Exchange 2016. This is true even though the VDP plugin in vCenter can display the individual mailboxes in a database backup, assuming you’ve installed and configured the Exchange backup agent appropriately.

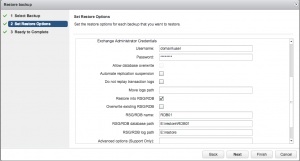

Of course, no implementation (or even test lab) of Exchange is complete without a tested backup and restore process, so I needed to explore other options. Overwriting the existing database during a restore is of no interest, since in The Real World one would never do that. I noticed there are a number of advanced options available when restoring a whole database. I’d never seen these options before because I’d always tested a GLR-type restore.

Having unticked “Restore to original location”, I entered the following advanced options to perform the restore.

To my surprise it worked first go, restoring the database into a new Recovery Database (RDB) and mounting it. All that was left was to restore the individual mailbox from the RDB into a mailbox of my choosing. For that I turned to PowerShell, running the following command (adjusted for my test lab, of course):

```powershell
# Copy the contents of 'Nathan Test' from the recovery database RDB01
# into the administrator mailbox, under a top-level folder named Restore
New-MailboxRestoreRequest -SourceDatabase RDB01 -SourceStoreMailbox 'Nathan Test' -TargetMailbox administrator -TargetRootFolder Restore -AllowLegacyDNMismatch
```

Full disclosure: I found that particular command on this site. Thanks Mike!
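Before creating a restore request, it can help to confirm that the recovery database is actually mounted and to see exactly which mailboxes it holds. The sketch below assumes the recovery database is named RDB01, as in the command above, and that it is run in the Exchange Management Shell. The DisplayName or MailboxGuid it lists is what `-SourceStoreMailbox` expects.

```powershell
# Run in the Exchange Management Shell; RDB01 is assumed to be the recovery database name.

# Confirm the recovery database is mounted (Mounted is only populated when -Status is used)
Get-MailboxDatabase -Identity RDB01 -Status | Format-List Name, Recovery, Mounted

# List the mailboxes contained in the recovery database, to pick the value for -SourceStoreMailbox
Get-MailboxStatistics -Database RDB01 |
    Sort-Object DisplayName |
    Format-Table DisplayName, MailboxGuid, ItemCount -AutoSize
```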

I verified the status of the restore using the Get-MailboxRestoreRequest cmdlet. The request successfully restored the contents of the mailbox “Nathan Test” into the administrator mailbox, under a folder named Restore.
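For a bit more detail than the bare status, the request can be piped into Get-MailboxRestoreRequestStatistics. Completed requests can then be removed so they don’t accumulate. This is a minimal sketch that assumes it runs in the Exchange Management Shell. It also assumes every completed restore request on the server is safe to remove, so filter more narrowly on a shared server.

```powershell
# Run in the Exchange Management Shell.

# Overview of each restore request and its current status
Get-MailboxRestoreRequest | Format-Table Name, TargetMailbox, Status -AutoSize

# Per-request progress detail
Get-MailboxRestoreRequest | Get-MailboxRestoreRequestStatistics |
    Format-List Name, Status, StatusDetail, PercentComplete

# Clean up once the request has finished (assumes all completed requests can be removed)
Get-MailboxRestoreRequest -Status Completed | Remove-MailboxRestoreRequest -Confirm:$false
```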

Too easy!